Recent results from Google Quantum AI and from Oratomic, Caltech, and Berkeley have significantly refined the quantitative understanding of the quantum threat to public-key cryptography, shifting the discussion from theoretical possibility to explicit engineering resource estimates. This article analyzes these developments from first principles, distinguishing between logical and physical qubits, circuit complexity, and error-correction overhead, and examining how improvements at each layer translate into concrete estimates for executing Shor’s algorithm against elliptic-curve cryptography. Google reports that solving the elliptic-curve discrete logarithm problem on secp256k1 can be organized into circuits requiring about 1,200 to 1,450 logical qubits and about 70 to 90 million Toffoli gates, corresponding under stated superconducting assumptions to fewer than half a million physical qubits and runtimes measured in minutes. The Oratomic preprint, by contrast, proposes a specific reconfigurable neutral-atom architecture using high-rate codes and nonlocal connectivity, arguing that cryptographically relevant Shor instances could be executed with as few as 10,000 reconfigurable atomic qubits in some configurations, while a 26,000-qubit configuration could bring ECC-256 runtimes down to a few days under a 1 ms stabilizer-cycle assumption. Together, these results suggest that the relevant frontier is moving through simultaneous advances in circuit optimization, error correction, and hardware architecture, with implications extending from cryptocurrencies to the broader digital infrastructure.

Introduction

The relationship between quantum computing and modern cryptography is often described in binary, and therefore misleading, terms, oscillating between the assertion that quantum attacks remain a distant theoretical construct and the opposite claim that current systems are on the verge of collapse. Neither position survives a reconstruction from first principles. The actual question is not whether Shor’s algorithm exists or whether elliptic curve cryptography is mathematically vulnerable, both of which have been established for decades, but how the physical realization of quantum computation translates abstract algorithmic complexity into concrete engineering requirements. It is precisely this translation layer that has undergone a significant refinement. Recent work by Google Quantum AI has provided updated resource estimates for breaking elliptic curve cryptography under realistic fault-tolerant assumptions, while parallel research, such as the high-rate error-correction constructions in the recent Oratomic preprint, suggests that these requirements may vary substantially with architectural choices rather than fundamental limits. The two efforts address different layers: the Google work primarily refines the compiled logical resource estimate and its mapping to a fast-clock superconducting regime, whereas Oratomic primarily studies how a specific neutral-atom architecture with high-rate codes can reduce the physical overhead of fault tolerance.

The consequence is that the problem must be reframed from a static statement about vulnerability to a dynamic system in which multiple layers, including logical circuit design, error correction efficiency, and hardware architecture, evolve simultaneously, thereby compressing the distance between theoretical possibility and practical feasibility in a manner that is neither linear nor easily extrapolated from past trends.

Foundations from first principles

Physical qubits as unreliable computational substrate

A quantum computer, when reduced to its most basic physical description, is a collection of quantum systems that encode information in superposed and entangled states, yet unlike the abstract qubits of quantum algorithms, physical qubits are subject to decoherence, control noise, and imperfect gate operations, which introduce errors with non-negligible probability at every computational step. Any attempt to execute a deep quantum circuit directly on physical qubits would therefore fail with overwhelming likelihood, since the probability of completing the circuit without a single error decays exponentially with the number of operations, while a meaningful computation requires the overall failure probability to remain small.

This immediately establishes a constraint that is independent of any particular cryptographic application, namely that scalable quantum computation requires a mechanism to suppress or correct errors, which in turn implies that the physical qubit is not the appropriate unit of analysis for computational capability, because its behavior is fundamentally unstable over the timescales required by algorithms such as Shor’s.
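The scale of this constraint can be illustrated with a minimal sketch. It assumes, as a simplification, that every operation fails independently with the same probability; the value 1e-3 matches the physical error rate quoted later for superconducting hardware, while the function name and the gate counts are purely illustrative.

```python
def error_free_probability(p_gate: float, n_gates: int) -> float:
    """Probability that n_gates independent operations all succeed.

    Assumes a uniform, independent error probability p_gate per operation,
    which is a simplification of real device noise.
    """
    return (1.0 - p_gate) ** n_gates


# Illustrative gate counts, far below the tens of millions of Toffoli gates
# discussed in this article.
for n in (100, 1_000, 10_000, 100_000):
    print(f"{n:>7} gates: {error_free_probability(1e-3, n):.3e}")
```

Even at a few thousand operations the probability of an error-free run is already small, which is why the uncorrected physical qubit is not a useful unit of computational capability.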

Logical qubits and the necessity of error correction

To construct a stable computational model, quantum information is encoded into logical qubits through quantum error-correcting codes, which distribute the state of a single logical qubit across many physical qubits in such a way that errors can be detected and corrected without directly measuring the encoded information, and this encoding introduces a multiplicative overhead that depends on both the physical error rate and the desired reliability of the computation.

In practical terms, this implies that:

- a logical qubit is an abstract, error-corrected entity,

- a physical qubit is merely a component in its implementation,

and the ratio between the two is typically on the order of hundreds to thousands for surface-code-based constructions, which are the most mature and experimentally validated schemes, although alternative approaches, such as those explored in the Oratomic preprint, aim to reduce this ratio by using high-rate quantum error-correcting codes that exploit nonlocal connectivity across a code block, a choice that is central to their proposed reconfigurable neutral-atom architecture rather than a generic drop-in alternative to surface-code designs.

The total size of a quantum computer capable of executing a given algorithm can therefore be expressed as:

$$\text{total physical qubits} = \text{logical qubits} \times \text{encoding overhead}$$

which immediately shows that any improvement in encoding efficiency translates directly into a reduction in total system size, even if the logical algorithm remains unchanged.
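As a rough illustration of this relation, the sketch below uses the rotated surface code, in which a distance-d patch uses d² data qubits and d² − 1 measurement qubits. The code distances are illustrative assumptions, not values taken from either preprint, and the count deliberately ignores routing space and magic state factories, so it is not comparable to a full machine estimate.

```python
def rotated_surface_code_qubits(distance: int) -> int:
    """Physical qubits in one rotated surface-code patch of the given distance."""
    # d^2 data qubits plus (d^2 - 1) measurement qubits.
    return 2 * distance**2 - 1


def total_physical_qubits(logical_qubits: int, encoding_overhead: int) -> int:
    """total physical qubits = logical qubits x encoding overhead."""
    return logical_qubits * encoding_overhead


LOGICAL_QUBITS = 1_200  # lower logical-qubit figure reported by Google

for d in (11, 13, 15):  # hypothetical code distances
    overhead = rotated_surface_code_qubits(d)
    total = total_physical_qubits(LOGICAL_QUBITS, overhead)
    print(f"d={d}: {overhead} physical per logical, {total:,} in total")
```

The same logical algorithm therefore spans a wide range of machine sizes depending only on the encoding, which is the lever that high-rate codes aim to pull.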

Gate sets, non-Clifford operations, and the true cost of computation

Quantum circuits are typically decomposed into a universal gate set composed of Clifford operations, which are relatively inexpensive under error correction, and non-Clifford operations, most notably the T gate or its equivalent constructions, which are significantly more costly because they require the preparation of high-fidelity ancillary states through a process known as magic state distillation, and this process dominates both the qubit count and the runtime of large-scale quantum computations.

Therefore, the cost of executing an algorithm such as Shor’s is not determined by the total number of gates in a naive sense, but rather by a weighted combination of:

- the number of logical qubits,

- the depth of the circuit,

- the number of non-Clifford gates,

and it is precisely the reduction of this last component that underlies much of the improvement reported in the Google analysis of elliptic curve cryptanalysis, where optimized circuit constructions significantly reduce the number of required Toffoli gates, thereby lowering the overall resource requirements when mapped onto a fault-tolerant architecture.
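To make the weight of non-Clifford gates concrete, the following sketch converts a Toffoli count into an equivalent T count. It assumes two well-known constructions, the textbook decomposition of one Toffoli into 7 T gates and measurement-assisted variants that use 4 T gates; which construction a given compilation actually uses is not stated here, so both factors are assumptions. The Toffoli counts are the figures reported in the Google analysis.

```python
# T gates consumed per Toffoli under two common constructions (assumed here).
T_PER_TOFFOLI = {"textbook": 7, "measurement_assisted": 4}


def t_count(toffoli_count: int, construction: str) -> int:
    """Equivalent number of T gates for a given Toffoli count."""
    return toffoli_count * T_PER_TOFFOLI[construction]


for toffolis in (70_000_000, 90_000_000):
    for name in T_PER_TOFFOLI:
        print(f"{toffolis:,} Toffoli ({name}): {t_count(toffolis, name):,} T gates")
```

Each of these T gates would consume a distilled magic state, so every Toffoli removed at the circuit level removes several distillation rounds downstream.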

Shor’s algorithm and the structure of cryptographic vulnerability

Shor’s algorithm provides a polynomial-time method for solving problems that are classically intractable, including integer factorization and discrete logarithms, and when applied to elliptic curve cryptography, it enables the recovery of private keys from public keys by solving the elliptic curve discrete logarithm problem, which forms the security foundation of widely deployed systems such as Bitcoin and Ethereum.

At the level of asymptotic complexity, this implies a complete break of elliptic curve cryptography in the presence of a sufficiently large quantum computer, yet this statement remains incomplete without specifying the constant factors and physical resource requirements, because polynomial time does not imply practical feasibility unless the underlying computation can be embedded into a physically realizable system.

This distinction, between mathematical vulnerability and engineering feasibility, is the central axis along which recent research has progressed, as evidenced by the updated resource estimates published by Google in its analysis of quantum attacks on cryptocurrency systems, and by alternative constructions such as those described in the Oratomic preprint, which suggest that the efficiency of error correction may be the dominant variable in determining when such attacks become practically realizable.

Layered decomposition of the problem

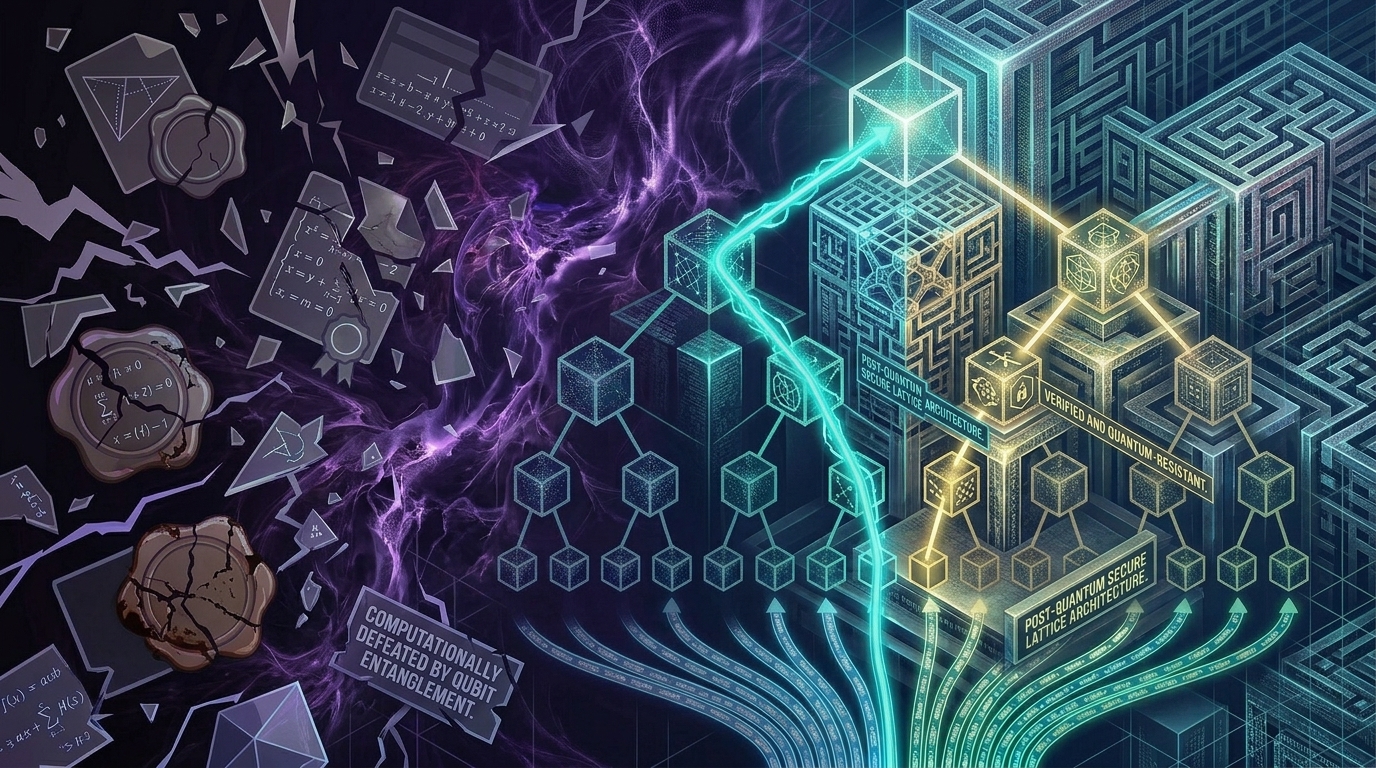

The discussion above can be summarized by decomposing the quantum attack problem into distinct but interacting layers, each of which contributes multiplicatively to the total resource requirement.

This decomposition makes explicit that improvements at any layer, whether through circuit optimization, more efficient error correction, or advances in hardware architecture, propagate through the entire stack, and therefore the feasibility of breaking cryptographic systems is not determined by a single breakthrough but by the combined evolution of multiple components whose interactions must be analyzed jointly rather than in isolation.

Revised resource estimates and circuit-level optimization

Scope and epistemic status of the result

The work published by Google, both in its research blog post “Safeguarding cryptocurrency by disclosing quantum vulnerabilities responsibly” and in the associated technical whitepaper, must be understood precisely in terms of what it does and does not claim. It does not introduce a new algorithm, does not demonstrate a working cryptanalytic attack, and does not alter the asymptotic complexity of Shor’s algorithm. Instead, it provides a refined mapping between the logical requirements of the algorithm and the physical resources needed to execute it under a fault-tolerant model, thereby reducing uncertainty in the engineering layer that separates theoretical vulnerability from practical exploitability.

A further novelty is that the authors do not publish the improved attack circuits directly, but instead publish a zero-knowledge proof that they compiled two circuits for the 256-bit ECDLP, one with 1,200 logical qubits and 90 million Toffoli gates and one with 1,450 logical qubits and 70 million Toffoli gates.

The key contribution is therefore epistemic rather than algorithmic, in the sense that it improves the precision of the cost model rather than the structure of the computation itself, yet this refinement has direct implications for risk assessment because security margins depend on quantitative thresholds rather than purely asymptotic arguments.

Quantitative results and normalization across layers

The central numerical claim of the Google whitepaper is that solving the elliptic-curve discrete logarithm problem for a 256-bit curve such as secp256k1 can be compiled into circuits requiring either no more than about 1,200 logical qubits and 90 million Toffoli gates, or no more than about 1,450 logical qubits and 70 million Toffoli gates, which, on superconducting architectures with $10^{-3}$ physical error rates and planar connectivity, can execute in minutes using fewer than half a million physical qubits.

To interpret these numbers correctly, it is necessary to distinguish three layers:

- logical requirement (algorithmic),

- circuit cost (gate count and depth),

- physical realization (error correction + hardware),

and to recognize that the apparent discrepancy between about 1,200 logical qubits and about 500,000 physical qubits is not a contradiction but a transformation across these layers, where the latter includes the full overhead of fault tolerance.
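The reported figures can be normalized with simple arithmetic. The sketch below compares the two compiled circuits by their product of logical qubits and Toffoli gates, used here only as a crude proxy for space-time cost, and derives the physical-to-logical ratio implied by the half-million bound. That ratio is an upper bound on the machine-wide average, including factories and routing, and is not a code parameter stated in the whitepaper; the dictionary keys and function names are illustrative.

```python
# Figures reported for the two compiled ECC-256 circuits.
circuits = {
    "low-qubit": {"logical_qubits": 1_200, "toffoli": 90_000_000},
    "low-gate": {"logical_qubits": 1_450, "toffoli": 70_000_000},
}
PHYSICAL_QUBIT_BOUND = 500_000  # "fewer than half a million physical qubits"


def qubit_toffoli_product(circuit: dict) -> int:
    """Crude space-time proxy: logical qubits times Toffoli gates."""
    return circuit["logical_qubits"] * circuit["toffoli"]


def physical_per_logical_bound(circuit: dict, bound: int = PHYSICAL_QUBIT_BOUND) -> float:
    """Upper bound on average physical qubits per logical qubit, all overheads included."""
    return bound / circuit["logical_qubits"]


for name, circuit in circuits.items():
    print(
        f"{name}: product={qubit_toffoli_product(circuit):.3e}, "
        f"physical/logical <= {physical_per_logical_bound(circuit):.0f}"
    )
```

The two circuits land within a few percent of each other on this proxy, which is consistent with reading them as two points on one qubit-versus-gate tradeoff rather than as two different attacks.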

Circuit-level sources of improvement

The reduction relative to earlier estimates should be understood as a refinement of the compiled logical attack rather than as a new asymptotic algorithm. Because the paper withholds the improved circuits under a responsible-disclosure model, the public document supports a high-level conclusion more securely than a low-level one: namely, that the authors have reduced the logical qubit and Toffoli requirements for ECC-256 substantially enough to report nearly a 20-fold reduction in physical qubits relative to prior estimates under their stated superconducting assumptions.

It is therefore safer to describe the novelty as a resource-estimation breakthrough at the logical and circuit layer than to over-specify which individual arithmetic subroutines account for the gain.

Dominance of non-Clifford gates and magic state distillation

The most important aspect of the Google result is the reduction in Toffoli gate count, because in a fault-tolerant quantum computer, each Toffoli gate requires the preparation of high-fidelity magic states through a distillation process that consumes both time and a large number of physical qubits.

This implies that:

- total runtime is heavily influenced by T/Toffoli count,

- total qubit count is influenced by the number of parallel distillation factories required,

and therefore reducing the number of non-Clifford gates has a non-linear effect on total resource requirements, simultaneously decreasing runtime, qubit count, and system complexity.

This is the primary reason why the reported improvement, often described as an order-of-magnitude reduction relative to prior estimates, emerges even though the underlying algorithm remains unchanged.

Runtime is not the limiting factor

One of the most consequential implications of the refined circuit is that, once a sufficiently large fault-tolerant quantum computer exists, the time required to execute the attack is relatively short compared to the effort required to build such a machine.

Under the superconducting assumptions stated in the Google whitepaper, namely $10^{-3}$ physical error rates and planar connectivity, the compiled ECC-256 circuits can execute in minutes using fewer than half a million physical qubits. This implies that, in fast-clock architectures, the dominant bottleneck is increasingly the construction of a sufficiently large fault-tolerant machine rather than the logical attack runtime itself, whereas in slower architectures such as the neutral-atom designs analyzed in the Oratomic preprint the space-time tradeoff remains more balanced, with smaller qubit counts but runtimes measured in days rather than minutes.
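A back-of-the-envelope sketch shows what "minutes" implies per gate. It assumes a total runtime of 10 minutes, which is an illustrative choice within the stated range, and strictly sequential Toffoli execution, which ignores any parallelism in the real compilation; both are assumptions rather than figures from the whitepaper.

```python
def seconds_per_toffoli(total_runtime_s: float, toffoli_count: int) -> float:
    """Average time budget per Toffoli if the gates ran strictly one after another."""
    return total_runtime_s / toffoli_count


def runtime_seconds(toffoli_count: int, per_toffoli_s: float) -> float:
    """Total runtime for a given per-Toffoli time under the same sequential assumption."""
    return toffoli_count * per_toffoli_s


ASSUMED_RUNTIME_S = 10 * 60  # hypothetical: 10 minutes
budget = seconds_per_toffoli(ASSUMED_RUNTIME_S, 90_000_000)
print(f"budget per Toffoli: {budget * 1e6:.2f} microseconds")

# The same circuit on a hypothetical machine that is 1,000x slower per logical operation.
print(f"slow-clock runtime: {runtime_seconds(90_000_000, budget * 1_000) / 86_400:.1f} days")
```

Under these assumptions the per-gate budget is a few microseconds, and a thousandfold slower logical clock moves the same circuit from minutes to days, which is the fast-clock versus slow-clock contrast in miniature.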

Structured representation of the Google resource model

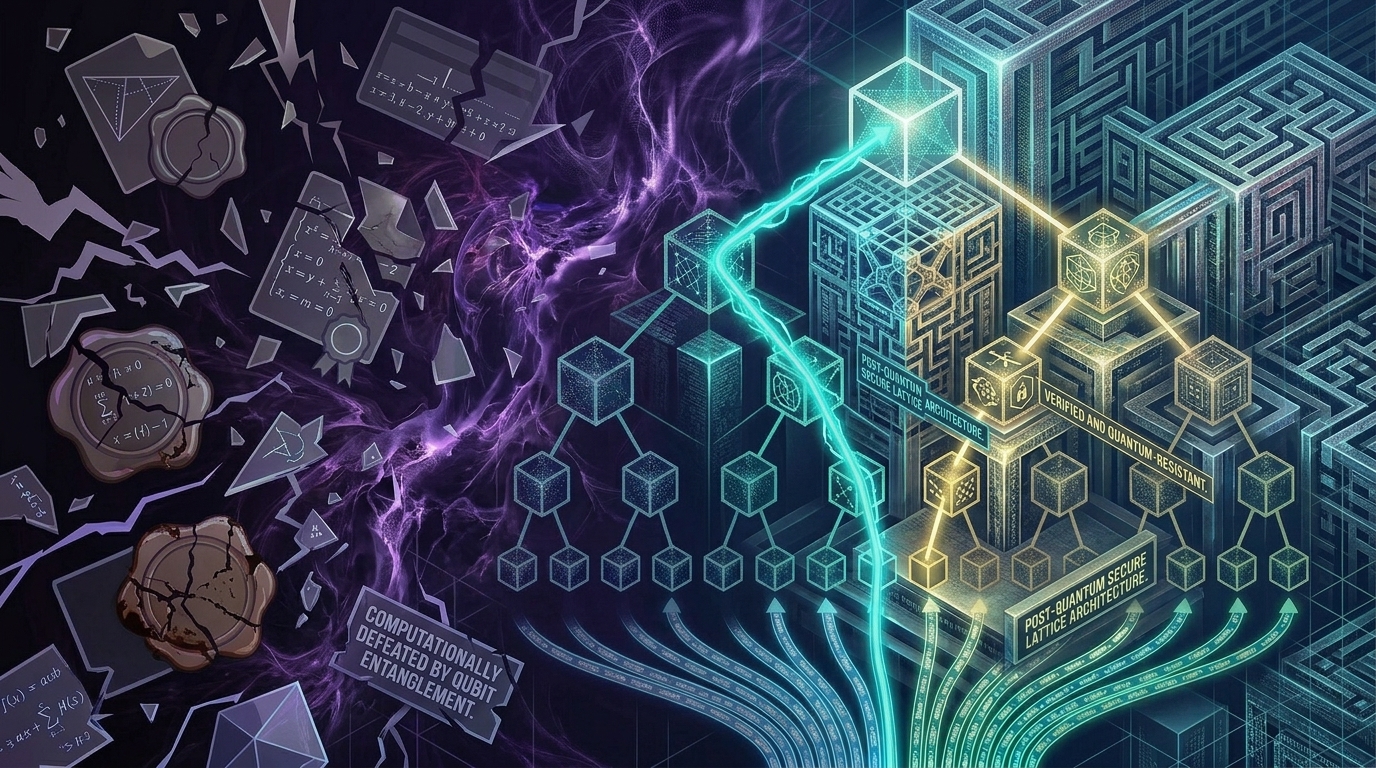

The relationship between logical requirements, circuit optimization, and physical realization can be summarized as follows:

This diagram makes explicit that the Google contribution operates primarily between the logical and circuit layers, reducing the gate count and thereby lowering the burden on the error correction layer, while the mapping to physical qubits remains dependent on the chosen error correction scheme, which is precisely the dimension along which the Oratomic approach introduces further variability.

Interpretation and limits

It is essential to emphasize that the result does not imply that current cryptographic systems are vulnerable today, because no existing quantum computer approaches the required scale or level of fault tolerance, but it does imply that the distance between current capability and required capability is smaller than previously estimated, and more importantly, that this distance is being reduced by ongoing improvements at multiple layers of the computational stack.

In this sense, the Google result should be interpreted not as a breakthrough in cryptanalysis, but as a compression of uncertainty, which forces a reassessment of timelines and migration strategies for post-quantum cryptography.

Implications for cryptocurrencies and the broader cryptographic ecosystem

Structural exposure of elliptic curve systems

Cryptocurrencies such as Bitcoin and Ethereum rely on elliptic curve digital signature schemes, most notably ECDSA and Schnorr variants, whose security is reducible to the hardness of the elliptic curve discrete logarithm problem, and therefore any entity capable of executing Shor’s algorithm at the scale described in the previous section would be able to derive private keys from public keys and produce valid signatures indistinguishable from legitimate ones, which implies a complete break of the authentication layer rather than a degradation of security.

However, the exposure is not uniform across all assets, because the attack requires access to a public key, and in many blockchain systems public keys are revealed only when funds are spent, meaning that:

- addresses that have never exposed their public key remain temporarily protected,

- addresses with reused or already exposed public keys are immediately vulnerable.

This introduces a dependency between usage patterns and security posture, which is unusual in classical cryptography but intrinsic to blockchain transaction models.
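The dependency between usage pattern and exposure can be sketched as a simple classification. The `Output` record and its fields are hypothetical and do not correspond to any particular blockchain API; the only logic encoded is the rule stated above, that the attack needs a revealed public key.

```python
from dataclasses import dataclass


@dataclass
class Output:
    """Hypothetical record describing funds held at one address."""
    address: str
    public_key_revealed: bool  # True once the public key has appeared on-chain
    balance: int


def exposure(output: Output) -> str:
    """Classify quantum exposure from whether the public key is already known."""
    if output.balance == 0:
        return "no funds at risk"
    if output.public_key_revealed:
        return "exposed"
    return "protected until spend"


samples = [
    Output("addr-reused", public_key_revealed=True, balance=5),
    Output("addr-fresh", public_key_revealed=False, balance=5),
    Output("addr-empty", public_key_revealed=True, balance=0),
]
for sample in samples:
    print(sample.address, "->", exposure(sample))
```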

Temporal asymmetry and attack window compression

A critical implication of the optimized quantum circuits described by Google is that, once the required hardware exists, the time needed to execute an attack is relatively short, on the order of minutes to hours, which creates a narrow but decisive window between the moment a public key is revealed and the moment an attacker can derive the corresponding private key.

In the Google framing, this matters because fast-clock cryptographically relevant quantum computers could in principle support on-spend attacks against public mempool transactions in cryptocurrency systems whose transaction flow reveals spendable public keys before settlement, meaning that key recovery and fraudulent replacement would occur within the transaction settlement window. The paper therefore shifts the threat discussion from abstract eventual breakage to timing-sensitive exploitability.
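The timing condition behind an on-spend attack reduces to a comparison between attack runtime and settlement window. In the sketch below the 600-second window corresponds to Bitcoin's nominal ten-minute block interval, used as a simplification because real confirmation times vary, and the two attack runtimes are illustrative stand-ins for a fast-clock and a slow-clock machine.

```python
def on_spend_attack_feasible(attack_runtime_s: float, settlement_window_s: float) -> bool:
    """True if key recovery could finish before the revealing transaction settles."""
    return attack_runtime_s < settlement_window_s


SETTLEMENT_WINDOW_S = 600  # simplification: nominal ten-minute block interval

scenarios = {
    "fast-clock (hypothetical 5 minutes)": 5 * 60,
    "slow-clock (hypothetical 3 days)": 3 * 24 * 3600,
}
for name, runtime_s in scenarios.items():
    print(name, "->", on_spend_attack_feasible(runtime_s, SETTLEMENT_WINDOW_S))
```

A slow-clock machine fails this test while remaining fully capable of attacking keys that are already exposed, which is why the two threat modes need to be assessed separately.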

ECC versus RSA: asymmetry in vulnerability timelines

A further implication, reinforced by recent resource estimates, is that elliptic curve cryptography is expected to become vulnerable earlier than RSA, not because of a difference in asymptotic complexity, but because optimized circuit constructions for elliptic curve discrete logarithms currently require fewer logical qubits and lower gate counts than comparable constructions for large-integer factorization.

This leads to a counterintuitive but important conclusion:

systems that have migrated from RSA to elliptic curve cryptography for efficiency reasons may face earlier exposure in a quantum context.

Therefore, risk models that rely on RSA-based timelines may systematically underestimate the urgency of migration for systems predominantly using elliptic curves.

Beyond cryptocurrencies: systemic cryptographic exposure

The implications extend far beyond cryptocurrencies, because elliptic curve and RSA-based primitives are deeply embedded in the global digital infrastructure, including:

- TLS protocols securing web communications,

- VPN systems and secure tunnels,

- digital identity frameworks and authentication systems,

- code signing and software update mechanisms,

and in many of these cases, the confidentiality requirement is long-lived, meaning that data encrypted today may still need to remain secure decades into the future, which introduces the well-known harvest now, decrypt later threat model, in which adversaries store encrypted data with the expectation of decrypting it once quantum capabilities become available.
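A common way to make this threat model operational is Mosca's inequality: data is at risk if the time it must stay confidential plus the time needed to migrate exceeds the time until a cryptographically relevant quantum computer exists. The sketch below encodes that inequality; all three inputs are planning assumptions supplied by the reader, and the example values are hypothetical rather than forecasts.

```python
def at_risk(shelf_life_years: float, migration_years: float, years_to_crqc: float) -> bool:
    """Mosca's inequality: at risk when shelf life + migration time > time to a CRQC."""
    return shelf_life_years + migration_years > years_to_crqc


# Hypothetical planning inputs, not forecasts.
print(at_risk(shelf_life_years=20, migration_years=5, years_to_crqc=15))  # long-lived records
print(at_risk(shelf_life_years=2, migration_years=3, years_to_crqc=15))   # short-lived data
```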

Convergence of independent improvement vectors

The most important structural shift emerging from the combination of the Google and Oratomic preprints is not that a single resource number has fallen, but that distinct layers of the stack are being compressed simultaneously by different groups: Google primarily at the logical and circuit layer, and Oratomic primarily at the error-correction and physical-architecture layer, so that the novelty lies in the joint movement of multiple resource frontiers rather than in any isolated theorem-level surprise.

From a systems perspective, feasibility can be expressed as:

$$\text{feasibility} \approx (\text{logical complexity reduction}) \times (\text{circuit optimization}) \times (\text{error correction efficiency}) \times (\text{hardware scaling})$$

where:

- circuit optimization reduces gate counts (Google result),

- error correction efficiency reduces physical qubit overhead,

- hardware scaling increases available qubits and stability.

The key insight is that progress along each axis compounds, meaning that even moderate improvements at each layer can collectively produce a substantial reduction in the overall distance to a cryptographically relevant quantum computer.
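The compounding effect can be shown with a few lines of arithmetic. The improvement factors below are hypothetical placeholders chosen only to illustrate the multiplication; they are not measurements from either preprint.

```python
from math import prod


def combined_reduction(factors: dict) -> float:
    """Overall improvement when per-layer factors multiply."""
    return prod(factors.values())


# Hypothetical per-layer improvement factors.
factors = {
    "circuit optimization": 2.0,
    "error correction efficiency": 3.0,
    "hardware scaling": 2.0,
}
print(f"combined improvement: {combined_reduction(factors):.0f}x")
```

Three individually modest factors already produce an order-of-magnitude change, which is why tracking any single layer in isolation tends to understate the overall movement.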

Architectural heterogeneity and uncertainty

Unlike classical computing, where architectural diversity converges toward a relatively small set of dominant paradigms, quantum computing currently exhibits multiple competing architectures, including superconducting circuits, trapped ions, photonic systems, and neutral atom arrays, each with distinct trade-offs in terms of connectivity, gate speed, error rates, and scalability.

This leads to fundamentally different feasibility profiles:

- high-connectivity systems may reduce error correction overhead but operate at lower clock speeds,

- fast-clock systems may achieve shorter runtimes but require larger qubit counts,

and therefore the timeline for practical cryptographic attacks is not determined by a single trajectory but by the interaction between these competing paradigms.

Google explicitly frames this contrast as one between fast-clock and slow-clock architectures, a distinction that helps explain why a superconducting machine with more qubits can still be more operationally dangerous for short-window attacks than a smaller but slower neutral-atom machine.

System-level representation of convergence

The interaction between these layers and architectural choices can be represented as follows:

This diagram highlights that feasibility is reached when the required capability, reduced by improvements in circuit design and error correction, intersects with the available capability provided by hardware scaling, and that both sides of this equation are evolving simultaneously.

Strategic implications: from cryptography to architecture

The final implication is that the transition to post-quantum cryptography is not merely a matter of replacing one algorithm with another, but a broader architectural transformation that requires:

- inventorying cryptographic dependencies across systems,

- enabling crypto-agility to support multiple algorithms concurrently,

- deploying hybrid schemes that combine classical and post-quantum primitives,

- coordinating migration across distributed and long-lived infrastructures,

and therefore the problem shifts from pure cryptography to systems engineering and governance, where the primary challenge is not the design of secure primitives, which already exist in standardized form, but the controlled and timely integration of these primitives into complex, interdependent systems.
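The first step in that list, the inventory, can be sketched as a small data structure. The `Dependency` record, the system names, and the `hybrid_ready` flag are hypothetical; the set of quantum-vulnerable algorithms reflects the primitives discussed in this article, and ML-DSA appears only as an example of a standardized post-quantum signature scheme.

```python
from dataclasses import dataclass

# Public-key primitives broken by Shor's algorithm, as discussed in this article.
QUANTUM_VULNERABLE = {"RSA", "ECDSA", "ECDH", "Schnorr"}


@dataclass
class Dependency:
    """Hypothetical inventory entry for one cryptographic dependency."""
    system: str
    algorithm: str
    hybrid_ready: bool  # True if a post-quantum or hybrid mode is already deployed


def migration_backlog(inventory: list) -> list:
    """Entries that still rely solely on a quantum-vulnerable primitive."""
    return [
        dep for dep in inventory
        if dep.algorithm in QUANTUM_VULNERABLE and not dep.hybrid_ready
    ]


inventory = [
    Dependency("code-signing", "ECDSA", hybrid_ready=False),
    Dependency("web-tls", "ECDH", hybrid_ready=True),
    Dependency("firmware-updates", "ML-DSA", hybrid_ready=True),
]
for dep in migration_backlog(inventory):
    print(f"{dep.system}: {dep.algorithm} needs migration")
```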

Conclusion of the impact analysis

Taken together, these considerations show that the significance of recent advances lies not in the immediate feasibility of quantum attacks, but in the compression of the uncertainty space, which reduces the margin for delayed action and increases the importance of proactive migration strategies, because the intersection between theoretical vulnerability and practical exploitability is no longer a distant abstraction but a moving boundary whose trajectory is shaped by multiple interacting technological trends.

From theoretical vulnerability to architectural inevitability

Reframing the problem

When reconstructed from first principles, the question of quantum impact on cryptography is not whether existing public-key systems are mathematically secure, because that question has already been answered in the negative by the existence of Shor’s algorithm, nor is it whether a large-scale quantum computer exists today, because it clearly does not, but rather how the evolving relationship between algorithmic requirements and physical realizability determines the point at which theoretical vulnerability becomes operationally exploitable.

Recent work by Google has reduced uncertainty on the required-capability side by refining the logical resource estimates for ECC-256 and mapping them into a superconducting physical regime, while the Oratomic preprint argues that, within a specific reconfigurable neutral-atom architecture using high-rate codes and nonlocal connectivity, the physical overhead of fault tolerance may be dramatically smaller than surface-code-centric discussions had implied. These are not interchangeable estimates, but they do jointly narrow the space of plausible futures.

The result is that the problem must be understood as a dynamic convergence process, not a static threshold.

The collapse of single-trajectory thinking

Historically, many discussions of quantum risk relied on a single broad intuition, usually surface-code-centric and often summarized as requiring very large, plausibly million-scale machines, whereas the new preprints show that explicit resource estimates now depend much more sharply on architectural and coding assumptions than that shorthand suggests.

The emerging evidence invalidates this assumption, because multiple architectural equilibria are now plausible:

- high-redundancy, fast-clock systems with large qubit counts,

- lower-redundancy, high-connectivity systems with smaller qubit counts but longer runtimes,

and these equilibria correspond to different points in a multi-dimensional design space defined by:

- connectivity constraints,

- error correction overhead,

- gate fidelity and speed,

- system integration complexity.

Therefore, the relevant question is no longer how many qubits are needed in a universal sense, but:

under which architectural assumptions, and with which trade-offs, a cryptographically relevant quantum computer becomes feasible.

Compression of uncertainty as the primary signal

The most important shift introduced by recent results is not a dramatic reduction in a single parameter, but the simultaneous tightening of bounds across multiple layers, which reduces the uncertainty that previously separated theoretical possibility from engineering plausibility.

In the Oratomic paper, the growth in experimentally relevant capability is not treated abstractly but tied to concrete neutral-atom advances, including below-threshold operation, universal fault-tolerant processing on up to 500 physical qubits, and trapping arrays exceeding 6,000 qubits, even though the authors are explicit that integrating these into a full Shor machine still requires substantial engineering development.

This can be expressed as a convergence between two evolving functions:

- required capability, which is decreasing due to circuit optimization and improved error correction,

- available capability, which is increasing due to hardware scaling and experimental progress,

and the intersection of these functions defines the feasibility threshold.

This representation emphasizes that no single breakthrough is required; rather, incremental improvements across multiple layers collectively move the system toward feasibility.
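The convergence of the two functions can be sketched under a deliberately simple model in which required capability halves and available capability doubles at fixed intervals. The exponential form and every parameter value are assumptions chosen for illustration, in arbitrary units; the sketch is not a forecast and does not use figures from either preprint.

```python
import math


def crossing_time(
    required_now: float,
    required_halving_years: float,
    available_now: float,
    available_doubling_years: float,
) -> float:
    """Years until available capability meets required capability.

    Assumes required(t) = required_now * 2**(-t / required_halving_years)
    and available(t) = available_now * 2**(t / available_doubling_years).
    """
    if available_now >= required_now:
        return 0.0
    gap_in_doublings = math.log2(required_now / available_now)
    # Doublings of the gap closed per year by both trends together.
    closing_rate = 1 / required_halving_years + 1 / available_doubling_years
    return gap_in_doublings / closing_rate


# Placeholder parameters in arbitrary units.
both = crossing_time(1024, 4, 1, 2)
hardware_only = crossing_time(1024, math.inf, 1, 2)
print(f"both trends: {both:.1f} years, hardware scaling alone: {hardware_only:.1f} years")
```

In this toy model the crossing arrives sooner when both sides move than when hardware scales alone, which is the quantitative content of the claim that no single breakthrough is required.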

Implications for risk and decision-making

From a risk perspective, the expected impact of quantum computing on cryptographic systems is already maximal, because the successful execution of Shor’s algorithm would completely break widely deployed primitives, and therefore the only variable that remains uncertain is the time horizon, which is itself becoming more constrained as estimates improve.

This leads to a critical asymmetry:

- the cost of early migration is bounded and largely known,

- the cost of delayed migration, if the threshold is crossed unexpectedly, is unbounded and potentially systemic,

and therefore rational decision-making must be based not on certainty of timelines, which is unattainable, but on the recognition that the variance of the timeline is decreasing, which increases the probability that the transition occurs within the planning horizon of current systems.

From cryptographic primitives to system architecture

The final and perhaps most consequential implication is that the problem is no longer primarily cryptographic, because post-quantum algorithms have already been standardized and are available for deployment, but architectural, in the sense that real-world systems must be:

- inventoried to identify cryptographic dependencies,

- redesigned to support crypto-agility,

- migrated through hybrid states that combine classical and post-quantum primitives,

- coordinated across organizational and jurisdictional boundaries.

This transforms the challenge from one of mathematical security to one of system integration, governance, and lifecycle management, where the difficulty lies not in defining secure algorithms but in ensuring their consistent and timely adoption across complex and interdependent infrastructures.

Final synthesis

Taken together, the current state of the field can be summarized as follows:

- the mathematical vulnerability of current public-key cryptography is established,

- the logical requirements for exploiting this vulnerability are increasingly well understood,

- the physical realization of these requirements is no longer usefully described by a single surface-code-centric paradigm,

- different groups are reducing different coordinates of the same resource frontier, with Google tightening the compiled logical resource estimate for ECC and its mapping to a fast-clock superconducting regime and Oratomic tightening the physical-overhead estimate for a specific neutral-atom architecture,

and therefore:

the transition from classical to post-quantum cryptography should be understood not as a reaction to a future breakthrough, but as an ongoing adaptation to a system whose feasibility boundary is already moving toward the present.

This reframing shifts the discussion from speculative forecasting to active architectural planning, which is the appropriate domain for addressing a risk that is both inevitable in principle and increasingly bounded in practice.

What these results change relative to the trust-expiration thesis

In my earlier article “When Digital Trust Expires: Quantum Computing and the Collapse of Signature-Based Security”, the central claim was that the real post-quantum problem is not merely the future loss of confidentiality, but the structural expiration of digital trust itself: signatures, trust anchors, and long-lived assertions do not fail visibly when cryptographic hardness decays, but continue to verify even after the assumptions that once justified their authority have collapsed. That earlier argument treated the threat primarily as a systems and governance problem, centered on delayed forgery, silent impersonation, abuse of long-lived trust anchors, and retroactive supply-chain compromise. The Google and Oratomic preprints do not overturn that thesis. They strengthen it by narrowing the quantitative uncertainty around the engineering path to cryptographically relevant attacks. In other words, what was previously a structural argument about the eventual expiration of signature-based trust is now reinforced by sharper resource estimates showing that the distance between theorem-level vulnerability and implementable attack is smaller, and more architecture-dependent, than many discussions had assumed.

More specifically, the earlier article argued that digital signatures are trusted in a timeless and binary way, while cryptographic hardness is not timeless, and that this asymmetry turns long-lived signed artifacts, such as firmware, software updates, certificates, and administrative trust anchors, into latent liabilities once sufficiently capable quantum attackers emerge. The Google whitepaper materially sharpens that claim for elliptic-curve systems by reporting compiled ECC-256 attack circuits with about 1,200 to 1,450 logical qubits and about 70 to 90 million Toffoli gates, together with a superconducting mapping that yields runtimes measured in minutes under stated assumptions. The Oratomic preprint sharpens the same thesis from a different direction by arguing that, within a specific reconfigurable neutral-atom architecture based on high-rate codes and nonlocal connectivity, the physical overhead of such attacks may be dramatically smaller than surface-code-centric intuitions suggested. Read through the lens of the earlier article, these results do not merely say that signatures will eventually become forgeable in principle; they say that the engineering frontier is now moving in ways that make the collapse of signature-based trust a concrete planning problem rather than a distant abstraction.

There is also an important refinement in timing. The earlier article emphasized delayed forgery and the retroactive erosion of authenticity, integrity, and evidentiary value. That remains correct, but the Google paper adds a sharper possibility for some cryptocurrency systems: in fast-clock architectures, the threat is no longer limited to “store now, forge later,” because on-spend attacks against public mempool transactions become conceivable once cryptographically relevant machines exist. This does not invalidate the trust-expiration framework; rather, it extends it. The previous thesis can now be restated more precisely: quantum risk to signature-based trust has at least two temporal modes, one retroactive and archival, where old signatures and long-lived trust anchors become unreliable over time, and one contemporaneous, where some high-value transactions may become vulnerable within their operational settlement window. The qualitative structure of the problem is the same, but the attack surface is now more temporally diverse than a purely archival formulation suggests.
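The two temporal modes can be made concrete with a small sketch. It is only an illustration under stated assumptions: the function name exposure_modes, its parameters, and every number used are hypothetical placeholders, chosen to echo the "minutes" and "a few days" runtime regimes mentioned above rather than taken from either paper. The rule it encodes is simply that an attack is contemporaneous if it can finish inside the settlement window, and retroactive if it can finish while the signature or key is still being relied upon.

```python
def exposure_modes(attack_runtime_s: float,
                   settlement_window_s: float,
                   reliance_period_s: float) -> list:
    """Classify which temporal modes of quantum risk apply to a signature.

    All three inputs are illustrative assumptions, in seconds:
      attack_runtime_s    - time a hypothetical machine needs to recover the key
      settlement_window_s - time between public-key exposure and settlement
      reliance_period_s   - how long verifiers keep trusting the key or signature
    """
    modes = []
    # Contemporaneous mode: the key is recovered before the transaction settles.
    if attack_runtime_s < settlement_window_s:
        modes.append("contemporaneous")
    # Retroactive mode: the key is recovered while it is still relied upon.
    if attack_runtime_s < reliance_period_s:
        modes.append("retroactive")
    return modes


MINUTE, DAY, YEAR = 60, 86_400, 365 * 86_400

# Hypothetical fast-clock attacker (10 minutes) against a 1-hour settlement
# window and a key that stays trusted for 10 years.
print(exposure_modes(10 * MINUTE, 60 * MINUTE, 10 * YEAR))

# Hypothetical slow-clock attacker (3 days) against the same targets.
print(exposure_modes(3 * DAY, 60 * MINUTE, 10 * YEAR))
```

Under these placeholder values the fast-clock case falls into both modes, while the slow-clock case falls only into the retroactive one, which is the distinction the paragraph above draws between on-spend and archival exposure.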

The governance consequence is therefore stronger than before. In the earlier article, I argued that post-quantum remediation should not be treated as a future cryptographic upgrade, but as a continuous security program centered on shortening trust lifetimes, reducing what signatures authorize, partitioning authority, preserving non-cryptographic provenance signals, and designing systems so that cryptographic failure does not automatically entail total institutional failure. The Google and Oratomic results do not change those prescriptions; they increase their urgency and make them less speculative. If the earlier article established that digital trust built on classical signatures is structurally perishable, the present article adds that the resource frontier is moving quickly enough that organizations should stop treating trust expiration as a remote possibility and instead treat it as an active architectural constraint on how long signatures, roots, certificates, signing keys, and authenticated artifacts may safely be relied upon.
